The enterprise software landscape is filled with closed systems: ERPs without APIs, legacy CRMs, property management tools, and vertical SaaS products that were never designed for programmatic access. Yet every one of these systems communicates over HTTP, meaning the API already exists. It's just not documented or exposed.

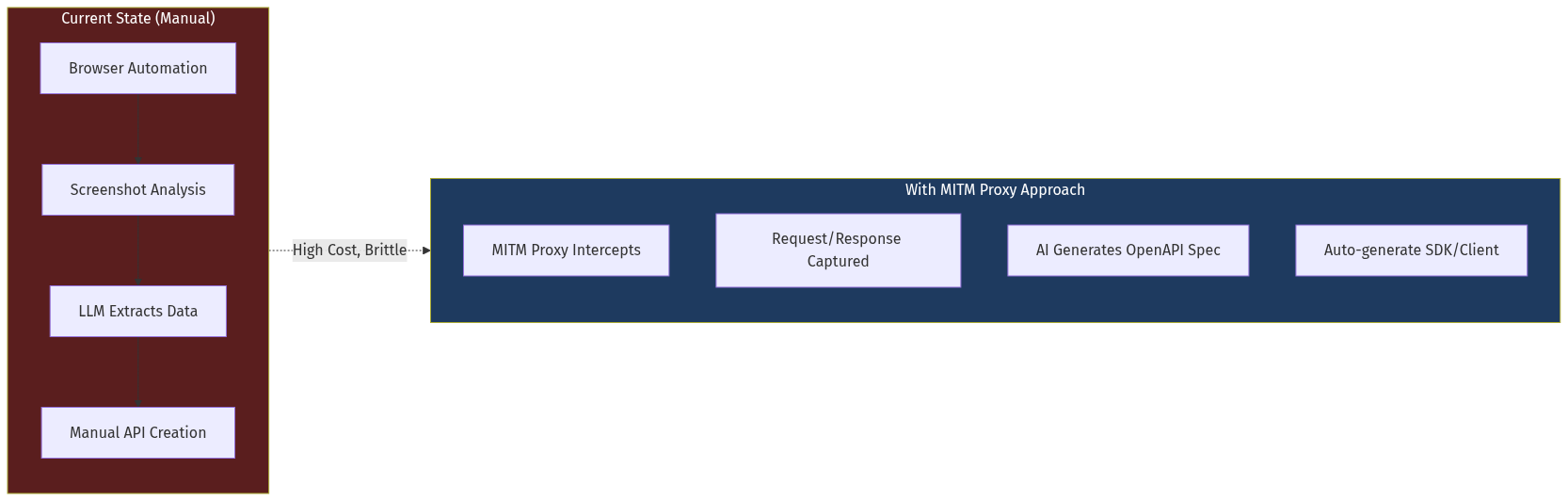

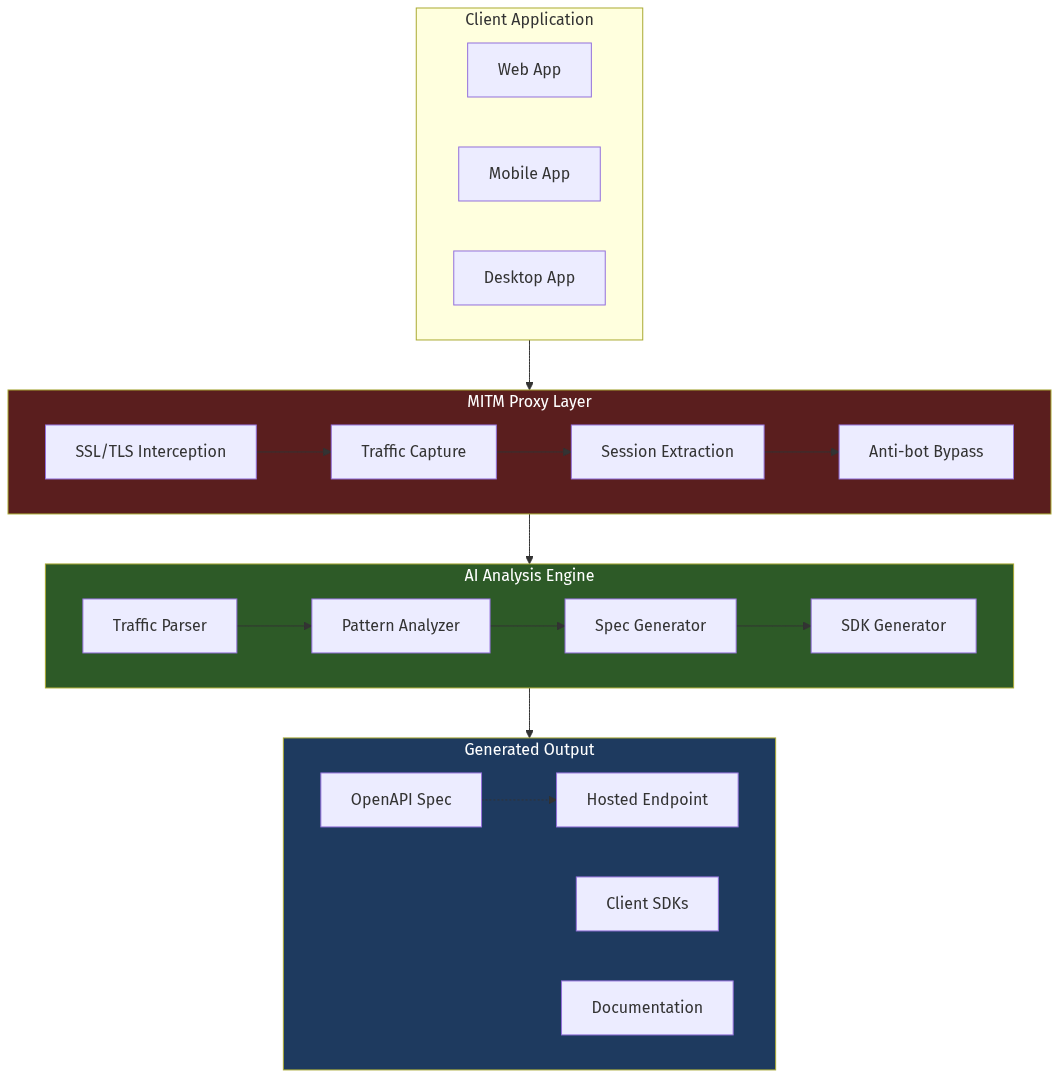

A new category of startups is emerging to solve this problem: API Extraction as a Service (AEaaS). Using man-in-the-middle (MITM) proxies to capture an application's traffic, combined with LLMs that analyze the request/response patterns, these companies can reverse-engineer any web or mobile application into a documented, usable API in hours rather than weeks.
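To make the extraction idea concrete, here is a minimal sketch of the pattern-analysis step: turning captured traffic into a list of candidate endpoints. It is an illustration under stated assumptions, not how any particular AEaaS product works. The `CAPTURED` records, their field names, and the `erp.example.com` host are all hypothetical, and a simple regex heuristic stands in for the LLM analysis the text describes.

```python
import re
from collections import defaultdict
from urllib.parse import parse_qs, urlsplit

# Hypothetical captured traffic. In practice these records would be exported
# from a proxy's flow log; the field names here are assumptions.
CAPTURED = [
    {"method": "GET", "url": "https://erp.example.com/api/invoices/1042", "status": 200},
    {"method": "GET", "url": "https://erp.example.com/api/invoices/1043", "status": 200},
    {"method": "POST", "url": "https://erp.example.com/api/invoices", "status": 201},
    {"method": "GET", "url": "https://erp.example.com/api/customers/77/orders?page=2", "status": 200},
]

# A path segment counts as an identifier if it is all digits or UUID-shaped.
ID_SEGMENT = re.compile(r"^(\d+|[0-9a-fA-F]{8}-[0-9a-fA-F-]{27})$")


def path_template(url: str) -> str:
    """Replace ID-like path segments with an {id} placeholder."""
    path = urlsplit(url).path
    parts = ["{id}" if ID_SEGMENT.match(p) else p for p in path.split("/")]
    return "/".join(parts)


def infer_endpoints(records):
    """Group captured requests by (method, path template)."""
    endpoints = defaultdict(
        lambda: {"count": 0, "statuses": set(), "query_params": set()}
    )
    for rec in records:
        key = (rec["method"], path_template(rec["url"]))
        entry = endpoints[key]
        entry["count"] += 1
        entry["statuses"].add(rec["status"])
        # Record which query parameter names were seen on this endpoint.
        entry["query_params"].update(parse_qs(urlsplit(rec["url"]).query))
    return dict(endpoints)


if __name__ == "__main__":
    for (method, template), info in sorted(infer_endpoints(CAPTURED).items()):
        print(method, template, info)
```

Grouping by method and path template collapses many observed requests into a handful of candidate endpoints, which is the raw material for a spec. A real pipeline would also need to infer request and response schemas, authentication flows, and parameter semantics, which is where the LLM analysis would come in.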

The market opportunity is large: every company running legacy software is a potential customer. The data moat compounds, because each extraction adds to a library of API specs that can be reused or sold. And the timing is right: AI agents need to interact with these systems, but they can't click buttons or read screens the way humans do.