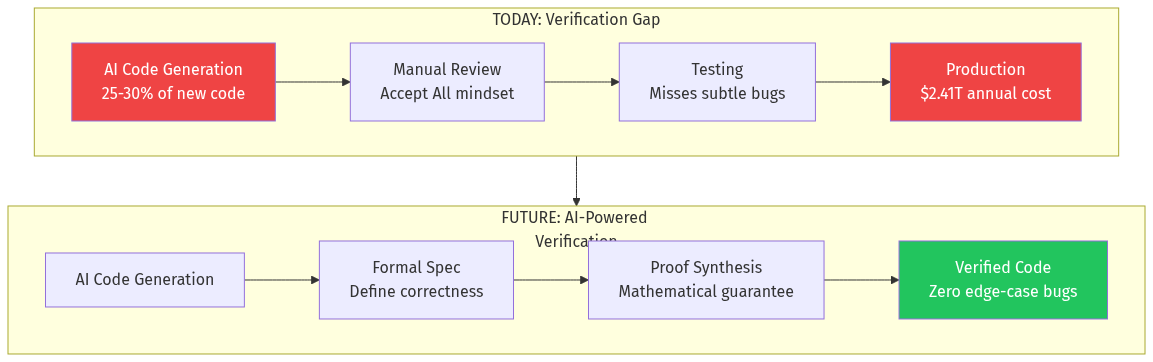

AI is rewriting the world's software at unprecedented speed. Google and Microsoft report that 25-30% of their new code is AI-generated. Microsoft's CTO predicts that share will reach 95% by 2030. Anthropic built a 100,000-line C compiler in two weeks for under $20,000.

But here's the problem nobody's solving: Who verifies all this code?

Veracode's 2025 report found that 45% of AI-generated code fails basic security tests. Java hit a 72% failure rate. Newer, larger models don't generate more secure code; they just generate insecure code faster.

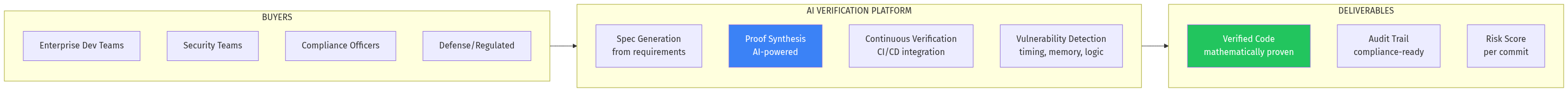

The market for AI code verification is emerging now, and whoever builds the "Datadog for AI code correctness" will capture a multibillion-dollar market.