This is not a horizontal HR tech play. This is vertical B2B intelligence for the hiring workflow.

## AIM Alignment

High-friction, high-trust transaction

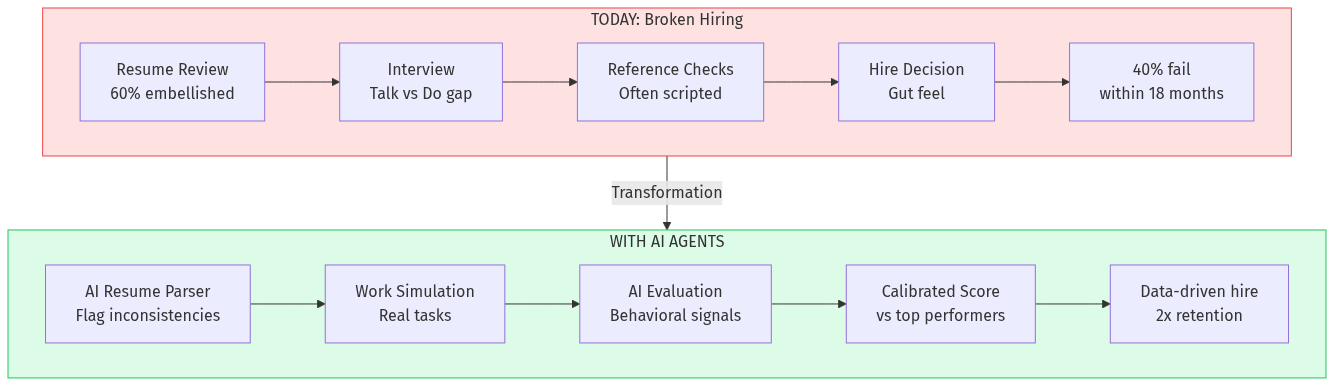

- Hiring is a $50K+ decision made with inadequate data

- Same pattern as industrial procurement

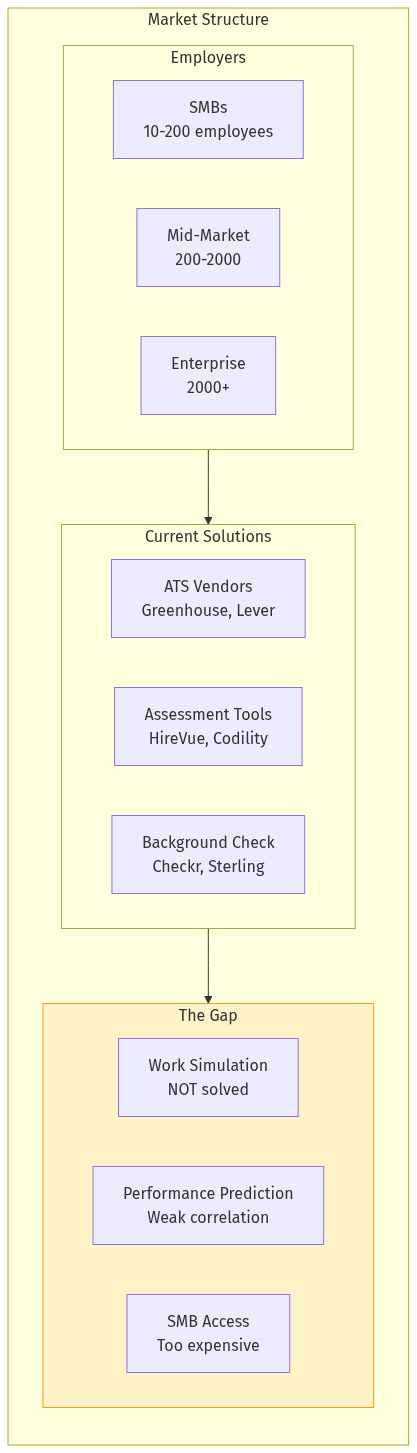

Fragmented market with offline workflows

- SMBs still hire on "gut feel"

- No standardization across industries

AI-native advantage

- Incumbents are built on pre-AI architecture

- AI evaluation is the moat

Network effects at vertical level

- Start with tech hiring, expand to manufacturing, healthcare, finance

- Each vertical becomes its own data moat

Repeat purchase model

- Companies hire continuously

- High retention once integrated into workflow

### Potential AIM Integration

- Hire.aim.in: skills assessment vertical

- Cross-sell with supplier qualification (same "can they do the job?" question)

- Data synergy with B2B professional directory

## Pre-Mortem: Why This Could Fail

Applying falsification and steelmanning:

Bear Case 1: Enterprises Won't Trust AI Evaluation

Counter: Start with SMBs who don't have alternatives. Enterprises follow once SMB success is proven. Offer hybrid mode with human review.

Bear Case 2: Candidates Reject Unpaid Work

Counter: Simulation design matters. An engaging 60-minute task feels different from a 4-hour take-home. Offer paid options. Share results with candidates so the exercise has learning value for them.

Bear Case 3: HireVue/Codility Add AI Evaluation

Counter: Incumbent architecture is interview-centric, not work-centric. They would have to rebuild from scratch. By then, we will have the data moat.

Bear Case 4: AI Evaluation Has Bias

Counter: Bias is statistically lower than in human interviews. Multi-model evaluation reduces single-model bias. Publish the scoring methodology for transparency.
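One way to picture multi-model evaluation is to score each work sample with several independent evaluators and take the median, so that a single outlier model cannot move the result. The sketch below is illustrative only: the function name `aggregate_scores`, the model labels, the 0-10 scale, and the `max_spread` threshold are all assumptions, not details from the source. Large disagreement between models is routed to the human review that the hybrid mode in Bear Case 1 describes.

```python
from statistics import median


def aggregate_scores(model_scores, max_spread=2.0):
    """Combine per-model scores (assumed 0-10 scale) into one result.

    model_scores: dict mapping a hypothetical model label to its score.
    max_spread:   assumed threshold; a wider gap between the highest and
                  lowest score flags the sample for human review.
    """
    if not model_scores:
        raise ValueError("need at least one model score")
    values = list(model_scores.values())
    spread = max(values) - min(values)
    return {
        # The median ignores a single extreme score in either direction.
        "score": median(values),
        "spread": spread,
        "needs_human_review": spread > max_spread,
    }


# Hypothetical scores: two models agree, one is far lower.
result = aggregate_scores({"model_a": 7.5, "model_b": 8.0, "model_c": 4.0})
print(result)
```

The median is used here rather than the mean because it is less sensitive to one divergent model; other aggregation rules would be equally plausible.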

## Verdict

Opportunity Score: 8.5/10

| Factor | Score | Rationale |
| --- | --- | --- |
| Market size | 9/10 | $5.7B and growing |
| Problem severity | 9/10 | $30K+ per bad hire |
| Current solution gaps | 8/10 | Work simulation unaddressed |
| AI disruption fit | 9/10 | Core capability match |
| Timing | 8/10 | Skills-based hiring momentum |
| Competitive moat | 7/10 | Data moat takes time to build |
| GTM clarity | 8/10 | PLG + community is proven |
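The factor scores can be combined with simple arithmetic. The sketch below computes the unweighted mean of the seven scores, then shows one hypothetical weighting that arrives at 8.5. The weights are an assumption made for illustration and are not taken from this analysis, which does not state how the headline score was derived.

```python
# Scores copied from the verdict table (each out of 10).
scores = {
    "Market size": 9,
    "Problem severity": 9,
    "Current solution gaps": 8,
    "AI disruption fit": 9,
    "Timing": 8,
    "Competitive moat": 7,
    "GTM clarity": 8,
}

# Hypothetical weights (assumption): double weight on the three
# factors scored 9, single weight on the rest.
hypothetical_weights = {
    "Market size": 2,
    "Problem severity": 2,
    "Current solution gaps": 1,
    "AI disruption fit": 2,
    "Timing": 1,
    "Competitive moat": 1,
    "GTM clarity": 1,
}


def unweighted_mean(values):
    """Plain average of the scores."""
    values = list(values)
    return sum(values) / len(values)


def weighted_mean(scores, weights):
    """Average of the scores, each multiplied by its factor weight."""
    total_weight = sum(weights[name] for name in scores)
    return sum(scores[name] * weights[name] for name in scores) / total_weight


mean_score = unweighted_mean(scores.values())
weighted_score = weighted_mean(scores, hypothetical_weights)
print(f"Unweighted mean: {mean_score:.2f}/10")
print(f"Weighted mean (hypothetical weights): {weighted_score:.2f}/10")
```

The unweighted mean comes to about 8.3, so a headline figure of 8.5 implies that some factors count for more than others or that the score was set by judgment.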

High-conviction opportunity. The paid trial project approach described in the r/SaaS post is already the manual version of this product. Automation and standardization of that workflow are inevitable.

The winner will be whoever builds the largest calibrated dataset of "work samples → job performance" correlations. Early mover advantage is significant.

## Sources

- r/SaaS: "Bad hire cost me over $30K" (2026-02-27)

- TrustMRR: AI Interview Copilot listing ($42K MRR, for sale)

- TrustMRR: BookedIn AI ($58K MRR, AI receptionists)

- Society for Human Resource Management: Cost of Bad Hire Study

- Glassdoor Economic Research: Hiring Benchmarks 2025

- Grand View Research: HR Tech Market Analysis 2025-2030